CHAPTER 10

Next Steps

By now, you understand most of the nuances of Istio and its ability to offload the east-west network traffic concerns of applications to the platform. With widespread adoption of service mesh, we now need to address challenges with respect to performance, interoperability, and cross-cluster deployment. Let’s explore some of these concepts succinctly to continue our learning journey beyond this book.

Service Mesh Interface

Popular service mesh implementations such as Istio, Linkerd, Kong, and Cilium expose their own APIs that are unique to the implementation. Custom APIs lead to vendors building tooling for a handful of popular service meshes at a cost to other offerings. Moreover, customers who deploy an implementation of the service mesh to their infrastructure lose the ability to migrate to another implementation without incurring huge costs, which leads to vendor lock-in.

Companies like Microsoft, Pivotal, Red Hat, Linkerd, and many others recognized the challenges of different service mesh APIs and pioneered building a baseline of common APIs for service mesh that can be implemented by different providers. A common API will bring standardization to customers and space for innovation to providers and tooling vendors.

The Service Mesh Interface (SMI) specification consists of four API objects, with each object defined as a Kubernetes CRD. The following are the objects declared in the SMI spec:

- Traffic spec: The types defined in this spec control the shape of traffic based on the protocol (HTTP and TCP). These resources work with the access control spec to control traffic at a protocol level. The two types defined in this spec are HTTPRouteGroup and TCPRoute.

- Traffic access control spec: The types in this spec restrict the audience of the services on the mesh. By default, the spec dictates that no traffic can reach any service, and access control is used to explicitly grant access to any service. This spec only controls request authorization, leaving the authentication aspect to the implementation, such as Istio. The only type defined in this spec is TrafficTarget, which defines the source, destination, and route of the traffic.

- TrafficSplit spec: This type determines the amount of traffic that should land at a version of a service on a per-client basis. Declaring a traffic split requires three elements: root service, which is the point of origin of traffic; backend service, which is a subset of root service; and weights, which is the ratio in which the traffic should be split between the various backend services.

- TrafficMetrics spec: This spec defines the types that surface metrics related to HTTP traffic from the service mesh. These metrics can be consumed by CLI tools, HPA scalers, automated canary rollouts, and visualization dashboards.
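To illustrate how the traffic spec and the access control spec fit together, the following sketch is modeled on the v1alpha1 versions of the two APIs. The names (the-routes, service-a, prometheus), the port, and the path are hypothetical, and the field layout changed in later SMI versions, so treat this as a sketch and check the version that your adapter supports.

```yaml
# Traffic spec: describes the shape of the HTTP traffic.
# All names and paths below are hypothetical.
apiVersion: specs.smi-spec.io/v1alpha1
kind: HTTPRouteGroup
metadata:
  name: the-routes
  namespace: default
matches:
- name: metrics
  pathRegex: "/metrics"
  methods:
  - GET
---
# Traffic access control spec: grants one source access to one destination
# for the routes named above. Identities are Kubernetes service accounts.
apiVersion: access.smi-spec.io/v1alpha1
kind: TrafficTarget
metadata:
  name: path-specific
  namespace: default
destination:
  kind: ServiceAccount
  name: service-a      # hypothetical destination workload identity
  namespace: default
  port: 8080           # assumed port
specs:
- kind: HTTPRouteGroup
  name: the-routes
  matches:
  - metrics
sources:
- kind: ServiceAccount
  name: prometheus     # hypothetical source workload identity
  namespace: default
```

Because the spec denies traffic by default, only GET requests to /metrics from the prometheus service account would reach service-a in this sketch.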

To support easy adoption, the community is working on creating adapters known as SMI adapters that form a bridge between SMI specs and the underlying platform. Istio already has an adapter that deploys as another CRD in the cluster in the istio-system namespace. The adapter regularly polls SMI objects and creates Istio configurations from the objects. The following is a simple example that shows the difference between specifications of traffic split using SMI and using virtual service in Istio.

Code Listing 153: SMI traffic split specification

    # SMI
    apiVersion: split.smi-spec.io/v1alpha1
    kind: TrafficSplit
    metadata:
      name: split-sample
    spec:
      service: web
      backends:
      - service: web-v1
        weight: 100
      - service: web-v2
        weight: 900

The following is the same policy specified using the native Istio configuration model.

Code Listing 154: Istio traffic split specification

    apiVersion: networking.istio.io/v1alpha3
    kind: VirtualService
    metadata:
      name: split-sample
    spec:
      # hosts is a required field; assumed here to match the root service
      hosts:
      - web
      http:
      - route:
        - destination:
            host: web
            subset: v1
          weight: 10
        - destination:
            host: web
            subset: v2
          weight: 90
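The Istio listing refers to the subsets v1 and v2, which Istio resolves through a destination rule for the same host. A minimal sketch follows. The rule name and the `version` pod labels are assumptions for illustration, so use whatever labels distinguish your deployments.

```yaml
apiVersion: networking.istio.io/v1alpha3
kind: DestinationRule
metadata:
  name: web            # hypothetical rule name
spec:
  host: web
  subsets:
  - name: v1
    labels:
      version: v1      # assumed pod label for the first version
  - name: v2
    labels:
      version: v2      # assumed pod label for the second version
```

With SMI, the same distinction is expressed by pointing each backend at its own Kubernetes service (web-v1 and web-v2) instead of at a labeled subset.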

SMI enables developers to experiment with multiple service meshes without making any changes to the application. As more and more service mesh implementations onboard SMI, customers will have the flexibility to use a unified API, and the tooling vendors will be able to use their existing investments across all the service meshes.

Knative

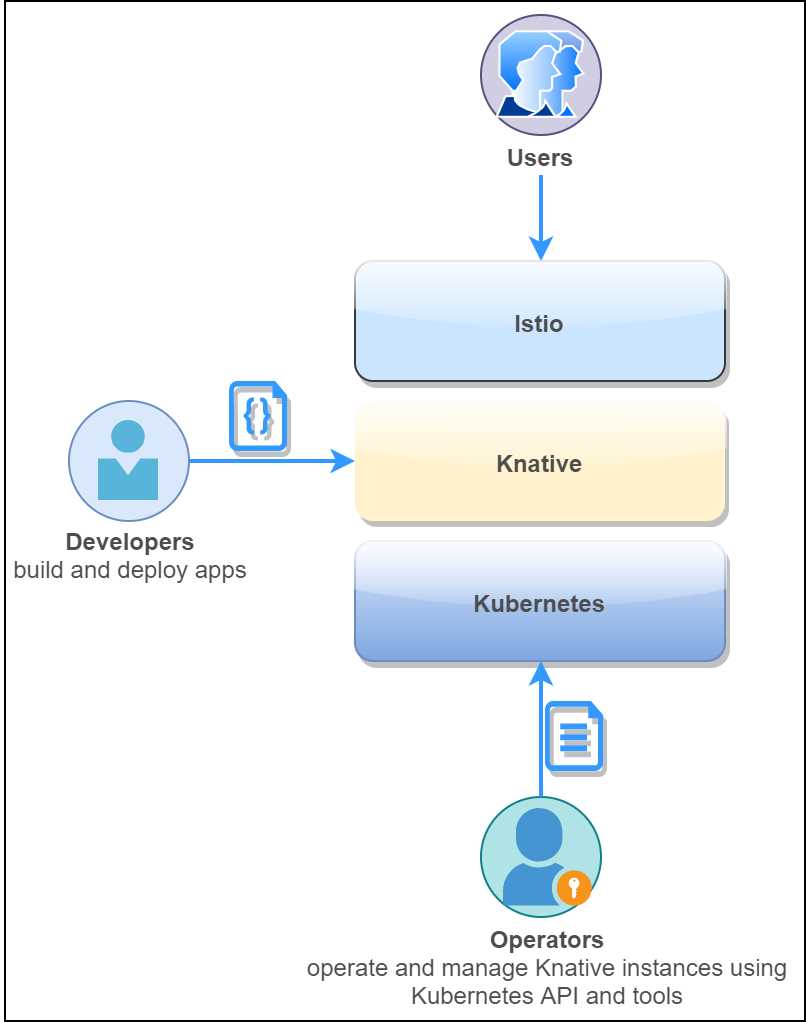

Knative is a serverless framework from Google, Pivotal, and other industry players to build serverless-style functions in Kubernetes. Knative is built upon Istio and Kubernetes, which provide it a runtime environment and advanced networking capabilities, respectively. Knative provides Kubernetes Custom Resource Definitions (CRDs), from which you can create custom objects to provision serverless applications in a cluster. The following diagram shows the various personas involved in delivering a serverless application with Knative.

Figure 25: Knative personas

Knative consists of three primary components (Build, Serve, and Event) that are used to deliver serverless applications. Each of the components is installed using a CRD in Kubernetes. Let’s take a brief look at these components.

Build

The Build spec defines how the application code can be packaged in a container from its source code. This spec is useful if you are using the Google container building service. This component is optional if you are using CI tools such as Azure DevOps, Jenkins, and Chef to generate a container image. The image generated through this specification is pushed to a container registry, and then used in subsequent Knative CRD specifications.

Serve

The Knative service template extends Kubernetes to support the deployment and execution of serverless workloads. It supports scaling of application instances all the way down to zero. If requests arrive after the application has been scaled down to zero, then the requests are queued and Knative starts scaling out application instances to process the pending requests. The asynchronous nature of processing makes Knative unsuitable as a host of backend services for web applications, but suitable for hosting batch jobs and event-driven jobs.

Code Listing 155: Service specification

    apiVersion: serving.knative.dev/v1alpha1
    kind: Service
    metadata:
      name: fruits-api-svc
      namespace: default
    spec:
      runLatest:
        configuration:
          revisionTemplate:
            spec:
              container:
                image: istiosuccinctly/fruits-api:1.0.0

The previous specification will deploy the fruits API service as a serverless service, which will scale out and in according to the number of requests made to the service.
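Scaling behavior can be tuned through annotations on the revision template. The following sketch reuses the previous service and adds the `autoscaling.knative.dev` annotations. The values are illustrative assumptions rather than recommendations.

```yaml
apiVersion: serving.knative.dev/v1alpha1
kind: Service
metadata:
  name: fruits-api-svc
  namespace: default
spec:
  runLatest:
    configuration:
      revisionTemplate:
        metadata:
          annotations:
            # Allow the revision to scale all the way down to zero.
            autoscaling.knative.dev/minScale: "0"
            # Cap the number of instances (assumed value).
            autoscaling.knative.dev/maxScale: "5"
            # Target number of concurrent requests per instance (assumed value).
            autoscaling.knative.dev/target: "10"
        spec:
          container:
            image: istiosuccinctly/fruits-api:1.0.0
```

Setting minScale to a value greater than zero keeps at least that many instances running, which avoids the delay that requests experience when the application has to start from zero.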

Events

The Events component provides a way for services to produce and consume events. The events can be supplied by any pluggable event source, and the events produced by serverless services can be delivered through various pub/sub broker services. Several event sources, such as Kafka, Container, AWS SQS, and Kubernetes events, are already supported by Knative.

Finally, serverless functions built for popular serverless managed services like AWS Lambda and Azure Functions already support containerization, and therefore, they can be easily migrated to Knative.

Istio performance

We have discussed how Istio abstracts network concerns from the application without impacting the application code. However, the data plane components and control plane components of Istio have performance implications, and they require a different mitigation strategy for each component. We will discuss the performance of the individual components of Istio next.

Control plane performance

The control plane of Istio manages services, virtual services, and other objects. The management overhead increases with the number of services on the mesh. As a result, the CPU and memory requirements of Pilot (one of the control plane components) are directly proportional to the number of service configurations. The CPU consumption of Pilot depends upon the following factors:

- The rate of deployment changes.

- The rate of configuration changes.

- The total number of services deployed in the mesh.

In a cluster where Istio is deployed with namespace isolation, a single instance of Pilot can handle approximately 1,000 services and 2,000 sidecars with just one vCPU and 1.5 GB of memory. Scaling out Pilot improves its performance by reducing the time required to apply configuration changes in the mesh.
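Scaling out Pilot can be expressed with a standard Kubernetes HorizontalPodAutoscaler. The following sketch assumes that Pilot runs as a deployment named istio-pilot in the istio-system namespace, and the replica counts and CPU threshold are illustrative. Istio's installation charts may already create a comparable autoscaler, in which case you should tune that one rather than add a second.

```yaml
apiVersion: autoscaling/v1
kind: HorizontalPodAutoscaler
metadata:
  name: istio-pilot            # assumed name, matching the deployment
  namespace: istio-system
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: istio-pilot          # assumed Pilot deployment name
  minReplicas: 1
  maxReplicas: 5               # illustrative upper bound
  # Add replicas when average CPU usage exceeds 80 percent of the request.
  targetCPUUtilizationPercentage: 80
```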

Data plane performance

We know that the data plane of Istio intercepts every request in the mesh, and it also takes care of networking concerns such as service discovery, routing, and load balancing. The networking features of Envoy directly affect its performance. For example, if the number of requests sent to the services in a mesh is high, then it will degrade the performance of the data plane. Similarly, factors such as the size of the request or response, the protocol used for the requests, and active client connections within the mesh affect the performance of the data plane. The operators of the mesh are required to balance the performance of the data plane with the expected volume of traffic to the services on the mesh.

We know that the sidecar proxy operates on the data path of the request, which is where it consumes CPU and memory. The total resource utilization of the proxy is dependent on the number of resources that you configure in it. For example, if you provision many listeners, policies, and routes, then the provisioned resources will increase the memory required by the proxy. Since Envoy does not buffer request data, the rate of requests does not affect the memory consumption of the proxy.

In Istio version 1.1, the concept of namespace isolation was introduced, which you can use to configure the CPU and memory quota at the namespace level. The namespace isolation feature is extremely useful for namespaces with many services since a proxy sharing the namespace with other services may eat into the quota of that namespace.
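One mechanism that Istio 1.1 added for isolating namespaces is the Sidecar resource, which limits the set of services whose configuration is pushed to the proxies in a namespace. A smaller configuration means fewer listeners and routes per proxy, and therefore less memory. The following is a minimal sketch in which the namespace name prod is hypothetical.

```yaml
apiVersion: networking.istio.io/v1alpha3
kind: Sidecar
metadata:
  name: default
  namespace: prod        # hypothetical namespace
spec:
  egress:
  - hosts:
    - "./*"              # services in the same namespace
    - "istio-system/*"   # control plane and shared services
```

Proxies in the prod namespace would then receive configuration only for services in their own namespace and in istio-system, instead of for every service in the mesh.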

Mixer policies such as authentication and filters can also add to the latency in responses from Envoy, as these policies are evaluated (or looked up) for each request. Another function of Mixer is to aggregate telemetry, for which the sidecar proxy spends some time collecting telemetry from each request. During the time of telemetry collection, Envoy does not process another request, which adds to the request latency. Therefore, telemetry should only be configured for required values so that Envoy does not spend additional time aggregating unnecessary logs.

Multi-cluster mesh

A multi-cluster mesh spans many clusters, but it is administered through a single console. It can be implemented as meshes with a single control plane or multiple control planes. In a multi-cluster service mesh, two services with the same name and namespace in different clusters are considered the same.

Multi-cluster service meshes abstract the physical location of services from the consumers, which ensures that services will be available to the client even if a cluster stops functioning. There are two approaches to implementing a multi-cluster service mesh:

- Provision a single Istio control plane that can access and configure services in all the clusters. This approach is beneficial for staging and/or production environments where secondary clusters may be used for canary releases or act as a backup for disaster recovery.

- Provision multiple Istio control planes with replicated services and routing configurations. In this setup, the ingress gateways are responsible for establishing communication between clusters. The DNS configurations of Istio control planes manage the communication between services across the clusters.
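In the replicated control planes setup, as documented for Istio releases of this era, a service in a remote cluster is typically made addressable through a service entry with a `.global` host name that points at the remote cluster's ingress gateway. The following is a hedged sketch. The service name, the virtual IP address, and the gateway address are hypothetical placeholders.

```yaml
apiVersion: networking.istio.io/v1alpha3
kind: ServiceEntry
metadata:
  name: fruits-api-remote          # hypothetical name
spec:
  hosts:
  # <service>.<namespace>.global is resolved by the mesh DNS configuration.
  - fruits-api-svc.default.global
  location: MESH_INTERNAL          # treat the remote service as part of the mesh
  ports:
  - name: http1
    number: 8000                   # assumed service port
    protocol: http
  resolution: DNS
  addresses:
  - 240.0.0.2                      # placeholder virtual IP, not routable
  endpoints:
  - address: 192.0.2.10            # hypothetical address of the remote ingress gateway
    ports:
      http1: 15443                 # gateway port reserved for cross-cluster traffic
```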

You can read more about multi-cluster service meshes in the official Istio documentation.

Summary

This concludes our journey of learning Istio. In this chapter, we discussed the service mesh standardization initiative called Service Mesh Interface (SMI). We also discussed how another upcoming project, named Knative, can help you build serverless applications on Istio and Kubernetes. We proceeded to discuss some of the important factors that affect the performance of Istio and discussed some mitigation strategies for them. Finally, we touched upon the subject of multi-cluster mesh deployments, which is an area that you should explore for building highly available Istio meshes.

We hope that you enjoyed learning Istio with us, and that we were able to ignite in you the desire to explore Istio. Thank you for being with us on this learning journey.
